This one is pretty cool! Could you please explain how it was made? Is it a single generation, or do you mix some layers later in post-production? :)

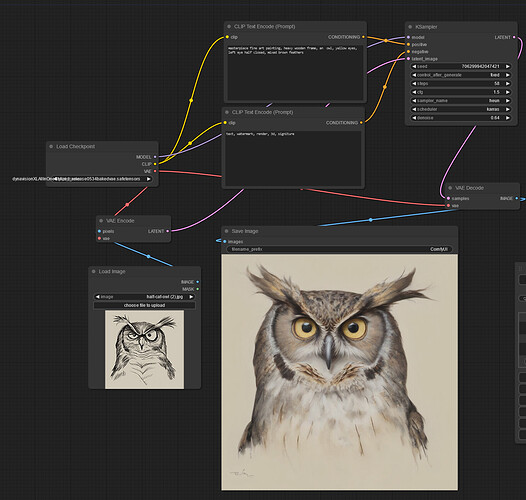

- Initial video to drive the animation made in After Effects

- imported into ComfyUI (a node based environment)

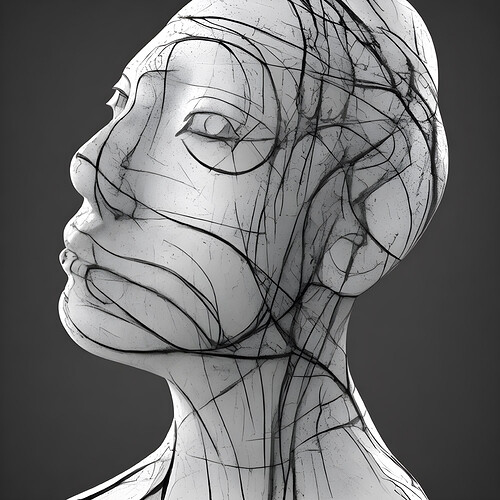

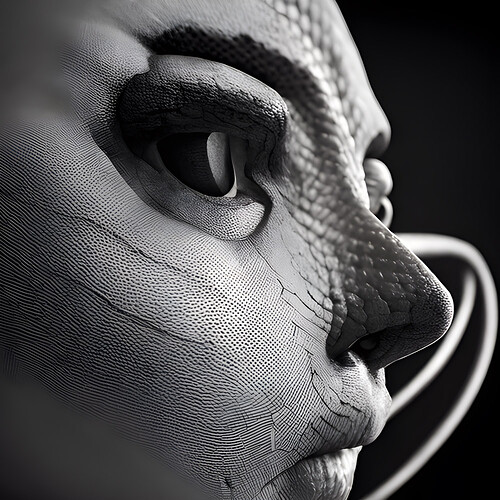

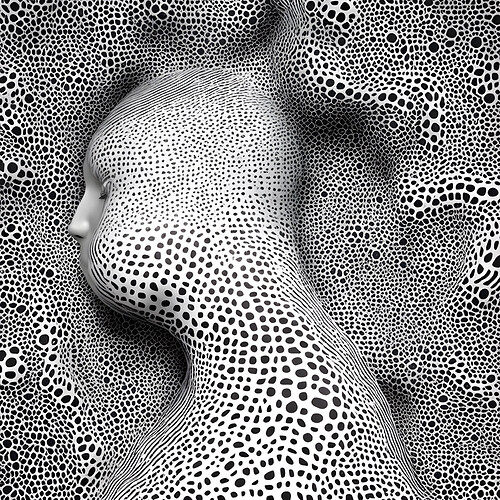

- motion from video is mixed with noise and sent through 2 ‘controlnets’ (soft edge and qr monster), one of which pays special attention to edges and the other to light and dark areas

- look comes from word prompting (which is keyframed so that certain words only hit on certain sections of the animation), negative word prompting and 4 IPAdapters (each differently weighted) that take input images which I’d made previously and try to match the final animation to the style

- there are also LoRAs which I trained myself to further influence the motion and add a specific concept (in this case based on my own film photographs)

- weight and last step of the controlnets are keyframed so that their influence varies throughout the animation

- latents are sent through FreeU before diffusion to improve image quality, and parameters are dialled in: diffusion steps, sampling type and CFG are adjusted

- when I’m happy with the initial animation it’s upscaled and sent through another diffusion process with fewer steps to add some detail to the larger version

- then it’s run through a frame interpolation algorithm to smooth it out by generating in-between frames

- then back into After Effects for colour correction and finalising

- soundtrack made in Logic

That’s the gist of it, I don’t have the Comfy workflow open as I’m in the countryside packing for Glastonbury so might’ve missed something.
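For anyone wondering what “keyframed” means for the controlnet weights and prompts in the list above, here is a rough Python sketch of the idea. It is not the actual Comfy workflow or any node’s API: the function names, frame numbers, weights and prompt strings are all made up for illustration, and it assumes simple linear interpolation between keyframes.

```python
from bisect import bisect_right


def keyframed_value(keyframes, frame):
    """Linearly interpolate a value at `frame` from sorted (frame, value) keyframes."""
    frames = [f for f, _ in keyframes]
    # Hold the first/last value outside the keyframed range.
    if frame <= frames[0]:
        return keyframes[0][1]
    if frame >= frames[-1]:
        return keyframes[-1][1]
    i = bisect_right(frames, frame)
    (f0, v0), (f1, v1) = keyframes[i - 1], keyframes[i]
    t = (frame - f0) / (f1 - f0)
    return v0 + t * (v1 - v0)


def active_prompt(prompt_keys, frame):
    """Return the prompt whose start frame is the latest one not after `frame`."""
    starts = [f for f, _ in prompt_keys]
    i = bisect_right(starts, frame) - 1
    return prompt_keys[max(i, 0)][1]


# Hypothetical schedules (illustrative numbers and words only).
controlnet_weight_keys = [(0, 1.0), (24, 0.4), (48, 0.8)]
prompt_keys = [(0, "prompt for the first section"), (24, "prompt for the second section")]

for frame in (0, 12, 24, 36, 48):
    weight = keyframed_value(controlnet_weight_keys, frame)
    print(frame, round(weight, 2), active_prompt(prompt_keys, frame))
```

The weight eases between keyframes so the controlnet’s influence changes gradually, while the prompt switches as a step at its start frame, which is one plausible reading of “certain words only hit on certain sections”.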

Cool, thank you for the detailed info! I’m also trying different ways to improve video generation. I’m taking my first steps, and the big problem is making it “stable” and smooth. Of course, I get that the key is finding the right workflow. I need to learn more about controlnets and how to use LoRAs… If you can recommend some good learning sources, I’d appreciate it a lot! I’m watching and reading all I can find, but most materials are a bit too technical and don’t cover more advanced techniques that mix different software to get more artistic results.

The most stable method for vid2vid currently is called AnimateDiff; that’s what I used on the animation above.

As for learning resources I’m not too sure as I’ve never really done tutorials but if you want to get into animating in ComfyUI I’d recommend joining the Banodoco discord server, lots of friendly people in there who know their stuff.

Will do so, thanks!

Of course. Obviously taking a quick vacation photo with your phone or drawing a “kindergarten” daisy on a piece of paper doesn’t yet make you an artist, and neither does prompting AI to draw you a person in the style of Michelangelo.

But what I was saying is, artists making stuff with AI are a different kind of artist than painters, just like painters are different from photographers. It’s not about being traditional or not, AI art will likely become “traditional” in the future.

Am I an artist when I take a camera photo of my dog? I would think not, so perhaps not all photography is art.

When a musician plays a Bach piece note for note, or a cover of Sweet Home Alabama, is that musician an artist?

Is a chef an artist? When I cook eggs for breakfast am I an artist?

Photography has become ubiquitous but you can still make art with your phone. I don’t even know why people buy cameras except to look like photographers (something someone else wrote recently).

In our sample culture, you can legit take Bach (I’d rather Satie) and sample / loop it with your fingers to make art. No royalties either bc the guys are likely long gone.

As to the art of breakfast. Depends on if you fry eggs in bacon grease or not, and whether you include pancakes.

Exactly, it’s all just a semantic argument on how to define what art is and who is an artist.

Some may be interested in how these (for now) arcane workflows may become more hands-on. There is some very interesting primary design research happening on the UI modalities that will bring the kind of fine control people like us will appreciate.

Prompt :

Can you make an image about someone creating a thread on elelktronauts.com, in her studio

Result on July 30, 2024 :

When will Elektronauts add an arranger view?!

Maybe it’s trending topics in a fader design style

But what I do see is that it fixed my typo. I wrote: elelktronauts

AI is smart enough to say elektronauts! haha

But never smart enough to draw the right number of black keys.

Tha fuck…

Be more subtle. You’re giving the money shots away too early in the clip. I’d suggest moving the fully nude breasts more towards the end.

What did you use to create this morphing effect?

looks like we don’t pay for that channel yet.